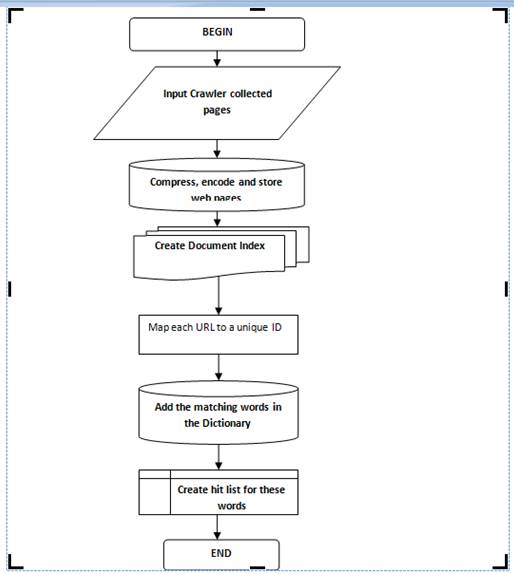

Google and other crawler-based search engines use software called a web crawler, or spider, to search and retrieve information from the web and build an index that lists popular keywords and the locations where they are found.
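To make the idea of an index of keywords and locations concrete, here is a minimal sketch in Python. It is an illustration only, not how Google stores its index: the function name `build_index`, the sample pages and the choice to record word positions are all assumptions made for the example.

```python
from collections import defaultdict
import re


def build_index(pages):
    """Map each word to the (url, word position) pairs where it occurs."""
    index = defaultdict(list)
    for url, text in pages.items():
        # Lowercase the text and split it into simple alphanumeric words.
        for position, word in enumerate(re.findall(r"[a-z0-9]+", text.lower())):
            index[word].append((url, position))
    return index


# Hypothetical pages standing in for documents a spider has retrieved.
pages = {
    "http://example.com/a": "Web crawlers index the web",
    "http://example.com/b": "Spiders follow links",
}

index = build_index(pages)
print(index["web"])
```

Looking up a word in `index` returns every page that contains it, together with where in the page it appears, which is the kind of lookup a search request relies on.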

The usual starting points are lists of heavily used servers and very popular pages, known as seed pages or seed URLs.

The spider begins with a popular site, indexing the words on its pages and following every link found within the site.

In this way, the spider or crawler quickly begins to travel, spreading out across the most widely used portions of the Web.
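The way a spider spreads outward from its seed pages can be sketched as a simple traversal. The example below assumes a breadth-first order and uses a small in-memory link graph (`TOY_WEB`, a made-up stand-in for real pages) instead of fetching anything over the network. The text does not say which traversal order real crawlers use, so treat this as one plausible illustration.

```python
from collections import deque

# Hypothetical link graph standing in for the web: page -> links found on it.
TOY_WEB = {
    "seed.example": ["a.example", "b.example"],
    "a.example": ["b.example", "c.example"],
    "b.example": ["seed.example"],
    "c.example": [],
}


def crawl(seeds, fetch_links, max_pages=100):
    """Visit pages breadth-first from the seeds, following each link once."""
    frontier = deque(seeds)   # pages waiting to be visited
    seen = set(seeds)         # pages already queued, to avoid revisiting
    order = []
    while frontier and len(order) < max_pages:
        url = frontier.popleft()
        order.append(url)
        for link in fetch_links(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return order


visited = crawl(["seed.example"], lambda url: TOY_WEB.get(url, []))
print(visited)
```

The `seen` set keeps the spider from looping when pages link back to each other, and `max_pages` is an assumed safety limit that bounds the crawl.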

The process by which Google's servers crawl the web is as follows:

- 1. When a user asks Google to search for a word or a group of words, it first checks its own database to see whether a matching page is available.

- 2. If the page is not available in the database, the spider program is activated.

- 3. When the Google spider looks at an HTML page, it notes the words within the page and where the words are found.

- 4. Words occurring in the title, subtitles, meta tags and other positions of relative importance are noted for special consideration during a subsequent user search.

- 5. The Google spider was built to index every significant word on a page, leaving out the articles “a,” “an” and “the.” Other spiders take different approaches.

- 6. The first thing a spider is supposed to do when it visits a website is look for a file called “robots.txt”. This file contains instructions for the spider on which parts of the website to index, and which parts to ignore.

- 7. The different approaches taken by other spiders usually attempt to make the spider operate faster, allow users to search more efficiently, or both.

- 8. For example, some spiders will keep track of the words in the title, sub-headings and links, along with the 100 most frequently used words on the page and each word in the first 20 lines of text.
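Two of the steps above lend themselves to a short sketch: checking "robots.txt" before visiting a page, and the selective approach of keeping title words, the most frequent words and the words in the first lines of text. The example uses Python's standard `urllib.robotparser` module; the helper names (`allowed`, `select_keywords`), the user agent `ToySpider` and the sample rules and text are assumptions made for illustration, not any particular search engine's implementation.

```python
from collections import Counter
import re
from urllib.robotparser import RobotFileParser

ARTICLES = {"a", "an", "the"}  # words left out of the index


def allowed(robots_lines, user_agent, url):
    """Return True if the robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_lines)  # parse rules given as a list of lines
    return parser.can_fetch(user_agent, url)


def words(text):
    """Lowercase words in text, with the articles removed."""
    return [w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in ARTICLES]


def select_keywords(title, body, top_n=100, first_lines=20):
    """Keep title words, the top_n most frequent words, and words in the first lines."""
    frequent = [w for w, _ in Counter(words(body)).most_common(top_n)]
    leading = words("\n".join(body.splitlines()[:first_lines]))
    return set(words(title)) | set(frequent) | set(leading)


# Hypothetical robots.txt content and page text.
robots = ["User-agent: *", "Disallow: /private/"]
print(allowed(robots, "ToySpider", "http://example.com/index.html"))
print(allowed(robots, "ToySpider", "http://example.com/private/page.html"))

body = "a spider crawls the web\nthe spider indexes words"
keywords = select_keywords("The Spider Guide", body, top_n=1, first_lines=1)
print(sorted(keywords))
```

The small `top_n` and `first_lines` values in the usage lines only keep the output short; the defaults mirror the 100 words and 20 lines mentioned in the list.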